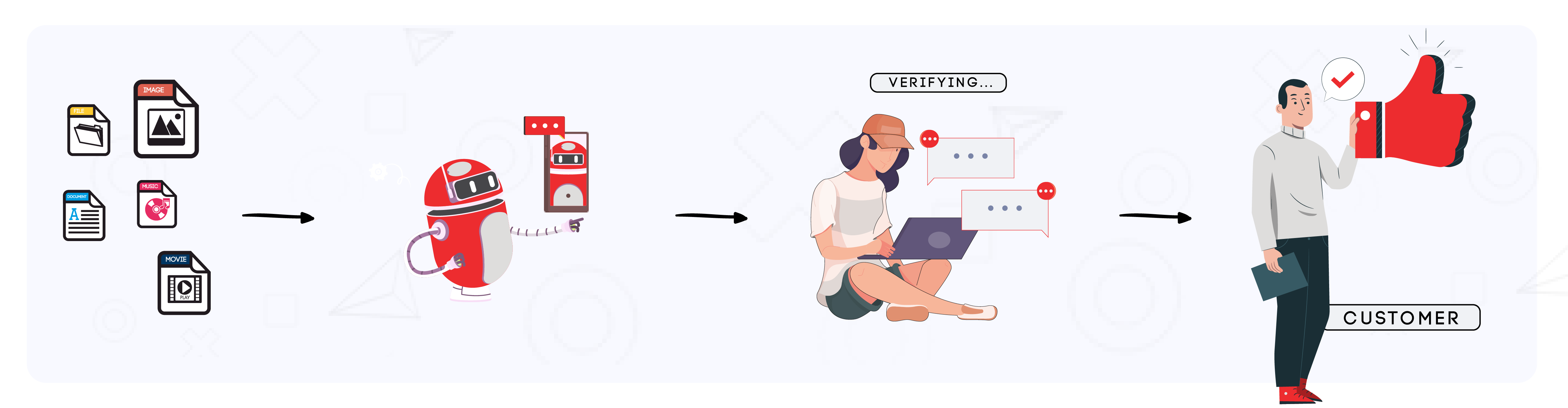

Professional Human-in-the-Loop (HITL) Services

AI Quality Assurance, Bias Mitigation & Reinforcement Learning from Human Feedback

AI is transforming industries, but human oversight remains critical to ensure accuracy, fairness, and trust.

Our professional Human-in-the-Loop (HITL) services bridge the gap between AI automation and human intelligence, making AI systems more reliable, ethical, and effective.

Trusted by leading companies worldwide for mission-critical AI quality assurance.

Why Human-in-the-Loop Services are Essential for AI

AI models are only as good as their training data. Without human review, they can produce biased, inaccurate, or misleading results.

Our HITL services ensure AI decisions are accurate, responsible, and impactful by merging automation with human expertise and domain knowledge.

Key Benefits of Human-in-the-Loop Services

- Error Detection & Quality - Reducing AI-generated mistakes and improving accuracy

- Bias Mitigation - Ensuring AI models make fair and ethical decisions

- Complex Scenario Handling - Addressing edge cases where AI alone struggles

- Continuous Learning & Optimization - Improving AI with iterative human feedback

- Regulatory Compliance & Trust - Meeting legal and ethical AI requirements

- Reinforcement Learning from Human Feedback (RLHF) - Enhancing AI models by integrating human-preferred responses to improve decision-making

Haidata’s HITL Approach

At Haidata, we apply HITL methodologies to refine and optimize AI models for real-world applications. Our expertise covers:

AI Validation & Quality Control

Ensuring AI-generated insights align with real-world accuracy and industry needs

Bias Audits & Fairness Checks

Identifying and correcting biased AI decisions to ensure ethical AI adoption

Expert Data Annotation

Providing high-quality, human-labeled datasets to enhance AI training and performance

AI Model Refinement

Leveraging continuous human feedback to make AI systems smarter, more adaptive, and trustworthy

Reinforcement Learning from Human Feedback (RLHF)

Training AI models with human preferences to improve alignment with real-world expectations

Human-Guided AI Decision-Making

Ensuring AI models deliver transparent and reliable outcomes by incorporating expert judgment

Case Study: AI in Sports Analytics

Challenge:

A leading sports analytics company leveraged AI to analyze match highlights and evaluate player performance. However, the AI model struggled with misclassifications, biased interpretations, and inaccuracies in key event detection.

Haidata’s HITL Solution:

- Bias Mitigation: Our expert reviewers identified and corrected inconsistencies in player rating calculations, ensuring fairness in assessments

- Data Refinement: We applied human validation to reclassify misidentified match moments, leading to more accurate analytics

- Continuous AI Enhancement: By implementing a human feedback loop, we helped retrain the AI model for improved performance over time

Results:

- 20% increase in accuracy of player performance ratings

- 35% reduction in AI misclassifications of key match events

- Enhanced trust from sports analysts and teams relying on AI-generated insights

Haidata’s HITL approach ensured the AI-driven sports analytics system delivered fair, precise, and actionable insights.

Comprehensive Human-in-the-Loop Services

AI Quality Assurance & Validation

Professional AI model validation with human expert oversight. We ensure your AI systems meet accuracy, reliability, and performance standards through rigorous testing and validation protocols.

- Model accuracy testing and validation

- Performance benchmarking against industry standards

- Edge case identification and handling

- Continuous monitoring and quality control

AI Bias Mitigation & Fairness Testing

Expert bias detection and mitigation services to ensure fair and ethical AI decision-making. We identify, analyze, and correct algorithmic biases across demographic groups and use cases.

- Comprehensive bias auditing and assessment

- Fairness metrics evaluation and testing

- Bias correction and model retraining

- Ethical AI compliance verification
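As an illustration of what a fairness metric evaluation can look like, the sketch below computes the demographic parity difference, a widely used check that compares how often each group receives a positive prediction. It is a minimal example under stated assumptions, not a description of Haidata's internal tooling: the function name, the binary 0/1 predictions, and the toy data are all hypothetical.

```python
from collections import defaultdict


def demographic_parity_difference(predictions, groups):
    """Return (gap, rates): the largest gap in positive-prediction rate
    between any two groups, plus the per-group rates.

    Assumes binary predictions (1 = positive outcome) and one group
    label per prediction. Both inputs are hypothetical placeholders.
    """
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        if pred == 1:
            positives[group] += 1
    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


# Toy data for illustration only (not real audit results).
preds = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap, rates = demographic_parity_difference(preds, groups)
print(f"positive rates per group: {rates}, gap: {gap:.2f}")
```

A gap near zero means the groups receive positive predictions at similar rates. What counts as an acceptable gap depends on the use case and the applicable regulations, so a human reviewer still has to interpret the number.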

Reinforcement Learning from Human Feedback (RLHF)

Specialized RLHF services for training AI models with human preferences. We create human feedback loops that align AI behavior with human values and expectations.

- Human preference data collection and curation

- Reward model training and optimization

- Policy optimization with human feedback

- Constitutional AI development
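To make the reward model step more concrete, the sketch below shows the pairwise preference loss commonly used in RLHF (the Bradley-Terry formulation): for each pair of responses ranked by a human, the loss is small when the reward model scores the preferred response higher. This is a generic, framework-free illustration, not a confirmed description of Haidata's pipeline. The function names and the reward scores are hypothetical.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Compute -log(sigmoid(reward_chosen - reward_rejected)).

    Written in a numerically stable form so that large margins in
    either direction do not overflow.
    """
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def mean_preference_loss(pairs):
    """Average the loss over (chosen, rejected) reward score pairs."""
    if not pairs:
        raise ValueError("at least one preference pair is required")
    return sum(pairwise_preference_loss(c, r) for c, r in pairs) / len(pairs)


# Hypothetical reward scores for three human-ranked response pairs:
# (score of the response the annotator preferred, score of the other).
scored_pairs = [(2.0, 0.5), (1.0, 1.0), (0.2, 1.4)]
print(f"mean preference loss: {mean_preference_loss(scored_pairs):.4f}")
```

In a real training run the reward scores would come from a neural network and this loss would be minimized by gradient descent. The third pair above, where the model scores the rejected response higher, contributes the largest loss.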

Human-Guided AI Decision-Making

Expert human oversight for AI systems requiring transparent and accountable decision-making. We ensure AI outputs are interpretable, reliable, and aligned with business objectives.

- Real-time human oversight implementation

- Decision transparency and explainability

- Risk assessment and mitigation protocols

- Regulatory compliance verification
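One simple way to implement human oversight is confidence-based routing: predictions the model is sure about are accepted automatically, and the rest go to a human review queue. The sketch below illustrates the pattern under assumptions of our own choosing. The `Prediction` class, the 0.9 threshold, and the clip labels are hypothetical and would be tuned per project.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model's score in [0, 1]


def route(prediction: Prediction, threshold: float = 0.9) -> str:
    """Accept confident predictions; send the rest to a human reviewer."""
    if prediction.confidence >= threshold:
        return "auto_accept"
    return "human_review"


def split_queue(predictions, threshold: float = 0.9):
    """Partition a batch into auto-accepted items and a review queue."""
    accepted, review = [], []
    for p in predictions:
        if route(p, threshold) == "auto_accept":
            accepted.append(p)
        else:
            review.append(p)
    return accepted, review


# Hypothetical batch of model outputs (illustrative values only).
batch = [
    Prediction("clip-001", "goal", 0.97),
    Prediction("clip-002", "foul", 0.62),
    Prediction("clip-003", "offside", 0.90),
]
accepted, review = split_queue(batch)
print("auto-accepted:", [p.item_id for p in accepted])
print("needs review:", [p.item_id for p in review])
```

Lowering the threshold reduces reviewer workload but lets more uncertain predictions through. Labels corrected by reviewers can then be fed back as training data.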

Industries We Serve with HITL Solutions

Healthcare & Medical AI

Medical imaging validation, clinical decision support, diagnostic AI quality assurance, and regulatory compliance for healthcare AI systems.

Finance & Banking

Fraud detection validation, credit risk assessment, algorithmic trading oversight, and financial AI compliance and fairness testing.

Autonomous Vehicles

Safety validation, edge case handling, perception system verification, and human oversight for autonomous driving AI systems.

Legal Technology

Legal document analysis validation, contract review quality assurance, and compliance verification for legal AI applications.

Content Moderation

Social media content validation, harmful content detection, policy compliance verification, and moderation AI quality control.

AI Research & Development

Foundation model validation, LLM safety testing, AI alignment research, and experimental AI system quality assurance.

Ready to Enhance Your AI with Human Intelligence?

Get expert Human-in-the-Loop services with guaranteed accuracy improvement. Scale your AI reliability with professional HITL solutions.

Free HITL Consultation Includes:

- AI model accuracy assessment

- Bias detection analysis

- RLHF implementation roadmap

- Custom HITL solution design

Email us directly: info@haidata.ai